Dr. Edoardo Persichetti. Source: TechGaged

Exclusive: Dr. Persichetti Challenges Key Claims in Cointelegraph’s Quantum Crypto Analysis

In Brief

- Quantum threats target specific legacy cryptography, not all systems.

- NIST-approved post-quantum schemes are rigorously tested, not guesses.

- Delaying migration increases risk due to “harvest now, decrypt later” attacks.

Recently, Cointelegraph published an article titled ‘Nobody knows if quantum secure cryptography will even work’, suggesting that the “dirty secret” of quantum-secure signatures is an unsolvable catastrophe for blockchains. While the timeline for quantum migration is a legitimate concern, the piece unfortunately conflated theoretical risks with established cryptographic facts.

To separate the signal from the noise, we spoke with Dr. Edoardo Persichetti, FAU Associate Professor, co-author of the NIST-standardized HQC, General Chair of EUROCRYPT 2026, and advisor at qLABS.

Dr. Persichetti is far from an observer in the world of post-quantum cryptography. In fact, he is one of its primary architects. As a co-author of an actual standard and General Chair of one of the world’s most prestigious cryptography conferences, he speaks from the front lines of the mathematics that will soon help secure the global economy.

Here, he explains why the so-called uncertainty narrative is technically misleading and how it can lead to the wrong policy conclusions.

On the specificity of the quantum threat

The first point of contention is the article’s erroneous suggestion that a generic, looming doom hangs over all cryptography. Dr. Persichetti points out that the threat is actually precise in scope and well understood by the mathematical community.

“The threat from quantum computers to current public-key cryptography is well-defined and specific,” he says. He points to what Shor’s algorithm, one of the foundational, landmark results demonstrating quantum advantage, actually does.

“Shor’s algorithm solves integer factorization and the discrete logarithm problem in quantum polynomial time, breaking RSA and elliptic-curve-based schemes (ECDSA, Schnorr, etc.).”

Dr. Persichetti notes that this is a precise and proven result, not speculation.

“Crucially, Shor’s algorithm doesn’t apply to the hard problems underlying post-quantum cryptography. There is currently no known quantum algorithm that provides a meaningful speedup against these problems. The article conflates the vulnerability of classical schemes with a generic uncertainty about all cryptographic hardness assumptions, which is simply incorrect.”
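To make “what Shor’s algorithm actually does” concrete: it reduces factoring a number n to finding the multiplicative order r of a random base a modulo n, and only that order-finding step needs a quantum computer. The minimal sketch below assumes a brute-force classical loop (the hypothetical helper `find_order`, which takes exponential time) in place of the quantum subroutine, followed by the classical post-processing that turns r into factors. It is an illustration of the reduction, not an implementation of the quantum algorithm.

```python
from math import gcd
from typing import Optional, Tuple


def find_order(a: int, n: int) -> int:
    """Return the smallest r > 0 with a**r % n == 1 (a must be coprime to n).

    Classical brute force standing in for the quantum order-finding step.
    This loop takes time exponential in the bit length of n; replacing it
    with a quantum subroutine is what makes Shor's algorithm polynomial-time.
    """
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def shor_postprocess(a: int, n: int) -> Optional[Tuple[int, int]]:
    """Classical part of Shor's algorithm: turn the order of a mod n into factors.

    Returns a nontrivial factor pair of n, or None when the base a is unlucky
    (odd order, or a**(r/2) == -1 mod n); in practice one retries with another a.
    """
    common = gcd(a, n)
    if common > 1:  # the random base already shares a factor with n
        return common, n // common
    r = find_order(a, n)
    if r % 2 == 1:
        return None
    half = pow(a, r // 2, n)
    if half == n - 1:  # a**(r/2) == -1 mod n yields only trivial factors
        return None
    # n divides (half - 1)(half + 1) but neither factor alone,
    # so gcd(half - 1, n) is a nontrivial divisor of n.
    p = gcd(half - 1, n)
    return p, n // p


if __name__ == "__main__":
    print(shor_postprocess(7, 15))  # (3, 5): the order of 7 mod 15 is 4
```

The same period-finding idea is what breaks the discrete logarithm problem behind ECDSA and Schnorr; no analogous reduction is known for the lattice and hash problems discussed next.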

“Best guesses” or decades of scrutiny?

A central claim of the critics is that the National Institute of Standards and Technology (NIST), the US agency responsible for vetting and standardizing cryptographic algorithms, is merely guessing which mathematics will hold up.

Dr. Persichetti argues this mischaracterizes the rigorous, decade-long elimination process that defines modern standards.

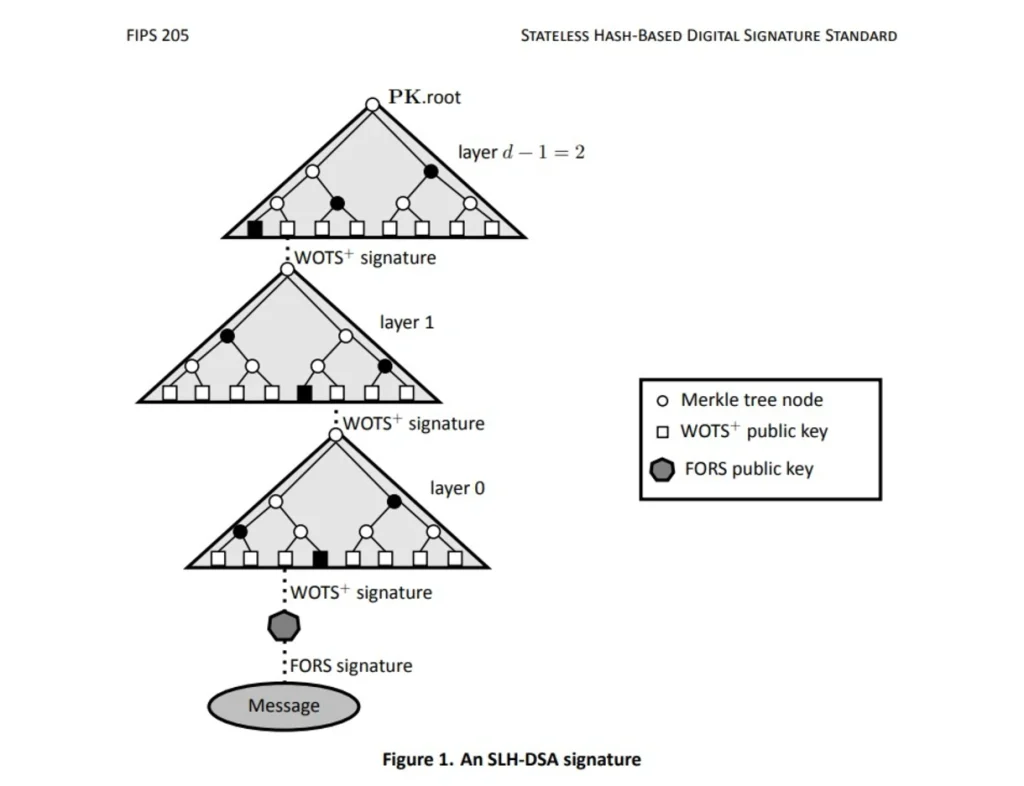

“The article describes NIST’s selected signature schemes, such as ML-DSA, FN-DSA, and SLH-DSA, as ‘best guesses.’ This is unfair and inaccurate. Unlike Rainbow or SIKE, which were based on relatively novel and insufficiently studied mathematical structures and were rightly eliminated early in the process, the lattice-based and hash-based schemes chosen by NIST have been subject to intense cryptanalytic scrutiny for well over a decade.”

Dr. Persichetti agrees that the ‘baking time’ argument (the years an algorithm must spend under public examination by the global research community before its resilience can be trusted) is real. However, he points out that the schemes NIST standardized have already had that baking time.

“Implying they are arbitrary guesses misrepresents the state of the art.”
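Part of why hash-based schemes are considered conservative is that their security rests only on the properties of a hash function. The sketch below is a Lamport one-time signature, the simplest ancestor of this family, assuming SHA-256 as the hash; it is not SLH-DSA, which builds a far more elaborate, stateless, many-time construction on the same principle. All function names are illustrative.

```python
import hashlib
import secrets

BITS = 256  # SHA-256 digest length in bits


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def keygen():
    # Private key: two random 32-byte secrets for every digest bit.
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(BITS)]
    # Public key: the hash of every secret.
    pk = [(_h(s0), _h(s1)) for s0, s1 in sk]
    return sk, pk


def _digest_bits(message: bytes):
    d = int.from_bytes(_h(message), "big")
    return [(d >> (BITS - 1 - i)) & 1 for i in range(BITS)]


def sign(sk, message: bytes):
    # Reveal one secret per digest bit. A key pair must sign only ONE
    # message: a second signature would leak enough secrets to forge.
    return [sk[i][bit] for i, bit in enumerate(_digest_bits(message))]


def verify(pk, message: bytes, signature) -> bool:
    if len(signature) != BITS:
        return False
    # Each revealed secret must hash to the public-key entry its bit selects.
    return all(
        _h(revealed) == pk[i][bit]
        for i, (bit, revealed) in enumerate(zip(_digest_bits(message), signature))
    )
```

Forging a signature here means finding a hash preimage; no quantum algorithm is known to do that efficiently for a well-designed hash, which is the kind of assumption the standardized hash-based scheme relies on.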

On the cost of inaction

Perhaps the most dangerous suggestion in the current discourse is that we have time to wait for a perfect solution. In the world of cybersecurity, the clock has already started.

“The article’s implicit tolerance for delay is dangerous,” says Dr. Persichetti.

“The threat model is not symmetric: adversaries engaged in ‘harvest now, decrypt later’ operations are already collecting encrypted traffic today.”

He adds that migration of large-scale infrastructure takes years, sometimes decades.

“Waiting for mathematical certainty, which can never fully arrive because no public-key scheme is unconditionally secure, is not a rational strategy. It is an excuse for inaction.”
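The timing argument above is often summarized by Mosca’s inequality, a rule of thumb from the quantum-risk literature (not a formula quoted from Dr. Persichetti): if x is how many years data must stay confidential, y is how many years migration takes, and z is the number of years until a cryptographically relevant quantum computer exists, then data encrypted today is already at risk whenever x + y > z. A minimal sketch, with purely hypothetical figures in the usage line:

```python
def at_risk(shelf_life_years: float, migration_years: float,
            years_to_quantum: float) -> bool:
    """Mosca's inequality: data is exposed if x + y > z.

    Traffic protected by classical public-key crypto until migration
    finishes can be harvested now and decrypted once a quantum computer
    exists, so the risk window opens as soon as x + y exceeds z.
    """
    return shelf_life_years + migration_years > years_to_quantum


if __name__ == "__main__":
    # Hypothetical: secrets must hold 10 years, migration takes 5,
    # a quantum computer arrives in 12.
    print(at_risk(10, 5, 12))  # True
```

Note that shortening migration time (y) is the only term an organization directly controls, which is why delay is costly.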

On pragmatism via hybrid schemes and the dangers of “home-baked” schemes

Rather than choosing between the “old world” and the “new world,” Dr. Persichetti supports a “defense in depth” strategy. It allows blockchains to hedge their bets during the transition.

“The suggestion to support multiple signature types in parallel is genuinely sound and should be encouraged,” he says.

“Hybrid constructions that combine a classical scheme with a post-quantum one provide defense in depth: if either component is secure, the hybrid is secure. This is a legitimate and pragmatic transition strategy for systems like blockchain that require long consensus processes.”
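The “if either component is secure, the hybrid is secure” property comes from an AND-combiner: the signer attaches both a classical and a post-quantum signature to the same message, and verifiers accept only if both check out, so a forger has to break both schemes. The sketch below shows only that acceptance logic. `HybridSignature`, `verify_hybrid`, and the toy stand-ins are hypothetical names, not a real library API; a production system would plug in real ECDSA and ML-DSA verifiers and would also need to stop one component from being stripped off and reused alone.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable

# Hypothetical verifier shape: (public_key, message, signature) -> bool.
Verifier = Callable[[bytes, bytes, bytes], bool]


@dataclass(frozen=True)
class HybridSignature:
    classical: bytes     # e.g. an ECDSA signature in a real system
    post_quantum: bytes  # e.g. an ML-DSA signature in a real system


def verify_hybrid(message: bytes, sig: HybridSignature,
                  classical_pk: bytes, pq_pk: bytes,
                  verify_classical: Verifier, verify_pq: Verifier) -> bool:
    # AND-combiner: accept only if BOTH components verify. A forger must
    # break both schemes, so the hybrid holds while either one is secure.
    return (verify_classical(classical_pk, message, sig.classical)
            and verify_pq(pq_pk, message, sig.post_quantum))


# Toy stand-ins, NOT signatures and NOT secure: they only let the
# combiner logic run without external crypto libraries.
def toy_tag(pk: bytes, message: bytes) -> bytes:
    return hashlib.sha256(pk + message).digest()


def toy_verifier(pk: bytes, message: bytes, signature: bytes) -> bool:
    return signature == toy_tag(pk, message)


if __name__ == "__main__":
    msg = b"transfer 1 coin"
    good = HybridSignature(toy_tag(b"ec-pk", msg), toy_tag(b"pq-pk", msg))
    print(verify_hybrid(msg, good, b"ec-pk", b"pq-pk", toy_verifier, toy_verifier))  # True
    # Classical half valid (e.g. forged by a quantum attacker), PQ half invalid.
    partial = HybridSignature(good.classical, bytes(32))
    print(verify_hybrid(msg, partial, b"ec-pk", b"pq-pk", toy_verifier, toy_verifier))  # False
```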

Yet, in a push for smaller signatures and faster throughput, some blockchain developers are looking toward non-standardized schemes like SHRINCS. Dr. Persichetti issues a stern warning against prioritizing performance over proven security.

“This reflects a broader and worrying trend in the blockchain space: letting efficiency requirements drive security choices. Using non-standardized, experimentally proposed schemes in production because they are smaller is exactly the kind of decision that leads to broken cryptography.”

He notes that the blockchain ecosystem in particular has a troubling history of prioritizing throughput over security, and offers a clear remedy.

“The correct guidance is unambiguous: use NIST-standardized schemes only. ML-DSA, FN-DSA, and SLH-DSA exist precisely so that practitioners don’t have to make these judgment calls themselves.”

Is a “better” standard coming?

Finally, for those holding out hope for a magic bullet that offers small signatures and total security, the reality is that the best available tools are already on the table.

“There is no credible roadmap for significantly ‘better’ post-quantum signature schemes beyond what is currently standardized or under active evaluation in NIST’s ongoing onramp process,” says Dr. Persichetti.

“The research community is not holding better solutions in reserve… Waiting for something better to emerge before acting is not a strategy – it’s wishful thinking.”

For the good doctor, the NIST post-quantum standards are not perfect, because no cryptographic standard ever is. But they also aren’t a shot in the dark. They are the product of rigorous, sustained, international evaluation, addressing a well-understood and imminent threat.

When it comes to the blockchain industry, the path forward isn’t second-guessing the math; it’s implementing it. As Dr. Persichetti concludes:

“Deploy NIST-standardized schemes, consider hybrid constructions as a hedge, and resist the temptation to optimize for size at the expense of security.”

Among his other projects, Dr. Persichetti will continue advising qLABS on how to implement the NIST PQC standard FN-DSA in smart contracts and Layer 1 chains, in combination with zero-knowledge proofs. The goal is to protect crypto assets in a quantum-safe vault and to offer the same technology as a migration pathway for Layer 1 chains.
